karsus

We have been running a league for four years now and want to re-evaluate the final table structure for the upcoming season.

The current approach is as follows:

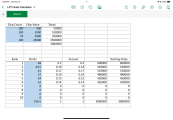

- Points are accumulated across 12 monthly 'regular' season games

- The top 10 regular-season points earners make the final table

- Chip stack sizes are determined by point total (400 BB for the average point total, adjusted up or down based on each player's actual points)

- The final table pays out to the top 4

- Final-table players still buy in (all funds go directly to the prize pool) at 2x the normal monthly buy-in

- The blind structure is 15-minute levels, with the increase between levels alternating between 33% and 25%

Looking at the last four years, we have the following data points:

- The smallest stack has typically been ~40% of the biggest stack

- The smallest stack has been ~250 BB or greater; the largest stack typically ranges around 500-600 BB

- In all but one year, the largest stack was the overall winner; however, places 2-4 were distributed across the rest of the field

- While challenging, it is possible (and has happened once) for a player to play only three of the twelve games and still make the final table (in position 10)

Concerns:

- We have players who join late in the season, and we want them to feel they have a real chance of not just making the final table but also being competitive

- There is a perception that players in slots 6-10 (typically 50% of the big stack) don't have a chance (note that the data doesn't necessarily support that perception)

- The best players may be given an even greater advantage (the better players are typically the highest points earners, and thus gain an additional edge over the weaker players at the final table)

Options we are considering:

- Do nothing: keep it the same and try to address any perception concerns

- Use points to determine ranking, but limit the starting-stack variance to a fixed percentage (e.g., the smallest stack is 60-70% of the big stack?)

- Keep the existing points structure but apply a 'smoothing' algorithm to reduce the variance in stack sizes

- Other thoughts?
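
To make the fixed-percentage and smoothing ideas a bit more concrete, here is a rough Python sketch. The exact points-to-chips formula isn't spelled out here, so `proportional_stacks` assumes stacks scale linearly with points, with the average point total mapping to 400 BB. The function names, the 0.65 ratio, the 0.5 exponent, and the sample point totals are all hypothetical placeholders, and the power transform is just one possible 'smoothing' choice, not an established method.

```python
from statistics import mean

AVG_STACK_BB = 400  # the average point total maps to 400 BB


def proportional_stacks(points, avg_stack=AVG_STACK_BB):
    """Assumed current rule: stack is proportional to points, average = avg_stack."""
    avg_points = mean(points)
    return [avg_stack * p / avg_points for p in points]


def ratio_capped_stacks(points, min_ratio=0.65, avg_stack=AVG_STACK_BB):
    """Shrink each stack toward the average until smallest/largest >= min_ratio."""
    raw = proportional_stacks(points, avg_stack)
    lo, hi = min(raw), max(raw)
    if lo >= min_ratio * hi:
        return raw  # already within the target ratio, leave untouched
    # Solve avg + k*(lo - avg) == min_ratio * (avg + k*(hi - avg)) for k.
    k = avg_stack * (1 - min_ratio) / (min_ratio * (hi - avg_stack) + (avg_stack - lo))
    return [avg_stack + k * (s - avg_stack) for s in raw]


def power_smoothed_stacks(points, exponent=0.5, avg_stack=AVG_STACK_BB):
    """Compress points with a power transform (exponent < 1), then rescale to avg_stack."""
    compressed = [p ** exponent for p in points]
    return proportional_stacks(compressed, avg_stack)


if __name__ == "__main__":
    # Hypothetical season totals for the top 10 (smallest is 40% of largest).
    sample_points = [160, 140, 125, 115, 105, 95, 85, 75, 70, 64]
    for name, fn in [
        ("proportional", proportional_stacks),
        ("ratio-capped", ratio_capped_stacks),
        ("power-smoothed", power_smoothed_stacks),
    ]:
        stacks = fn(sample_points)
        print(f"{name:15s}", [round(s) for s in stacks],
              f"min/max = {min(stacks) / max(stacks):.2f}")
```

Both variants keep the finishing order and the 400 BB average; they only pull the stacks closer together. With a square-root transform, a smallest-to-largest points ratio of 40% turns into a stack ratio of about 63%, which happens to land in the 60-70% range mentioned above.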
